First, all credit goes to Mark Felder’s blog post upon which this is based.

You can buy an Apple Time Capsule (I did) to back up your Mac. Now that I have two MacBooks, I have run out of space, so I want to back up to ZFS instead.

By backing up to my ZFS filesystem:

- I am no longer constrained by the capacity of a single disk

- I can back up my backups to tape

- I get ZFS checksums on my backups

- I can separate different devices into different ZFS filesystems

What’s not to like about that?

Access from a different subnet

IMPORTANT: If you are, or are likely to be, on a VPN, you may want to read “Accessing your Time Capsule when on a different subnet” first.

Your first backup should be done on a wired network if possible. It will be faster.

The install

```sh
pkg install netatalk3 avahi-app
```

The filesystem

```sh
zfs create tank_data/time_capsule
```

I also imposed a 1T quota on this. Without that, the backups would continue to grow and old data would never be removed.
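Applying and checking that quota takes two commands. The sketch below is only an illustration: the dataset name and the 1T figure come from this post, while the `run` helper and the `DRY_RUN` switch are my own convention, so by default it prints the commands instead of executing them.

```sh
#!/bin/sh
# Sketch: cap the Time Machine dataset so Time Machine eventually sees a
# "full" disk and removes old backups instead of growing forever.
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to really run zfs.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

set_quota() {
    # $1 = dataset, $2 = quota (e.g. 1T)
    run zfs set "quota=$2" "$1"
    run zfs get quota "$1"
}

set_quota tank_data/time_capsule 1T
```

Run it as root with `DRY_RUN=0` once the printed commands look right.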

The configuration

/usr/local/etc/afp.conf

```ini
[Global]
  vol preset = default_for_all_vol
  log file = /var/log/netatalk.log

  # air01 dent dent air01
  hosts allow = 10.3.3.90 10.3.3.60 10.5.5.60 10.5.5.61
  mimic model = TimeCapsule6,116

[default_for_all_vol]
  cnid scheme = dbd
  ea = ad

[air01]
  path = /tank_data/time_capsule/air01
  valid users = air01
  time machine = yes

[dent]
  path = /tank_data/time_capsule/dent
  valid users = dent
  time machine = yes
```

NOTES:

Line 6 (`hosts allow`): the hosts allowed to connect. I use hardcoded IP addresses, both wired and wireless, for the two hosts I am allowing to back up. The comment above it names the laptop behind each address, in order.

Line 13 (`[air01]`): air01 is the device name that will show up in the list of devices you can back up to. It is also the hostname of my laptop.

Line 14 (`path`): the path where the backups will go. That directory does not exist yet; it is created below.

Line 15 (`valid users`): the FreeBSD user name I will use to authenticate. This user must own the directory from line 14.

Lines 18-21: the same as the section above, but for the laptop called dent instead of air01.
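Because every Time Machine volume section follows the same pattern, it can be generated instead of typed. This is a hypothetical helper, assuming (as in the config above) that the section name, the FreeBSD user, and the directory under `/tank_data/time_capsule` all share the laptop's name; `tm_volume` and `BASE` are names I made up for the sketch.

```sh
#!/bin/sh
# Sketch: print one Time Machine volume section of afp.conf per laptop.
# Convention assumed: section name = user name = directory name.
BASE="${BASE:-/tank_data/time_capsule}"

tm_volume() {
    # $1 = laptop name (also the only user allowed on the volume)
    printf '[%s]\n' "$1"
    printf '  path = %s/%s\n' "$BASE" "$1"
    printf '  valid users = %s\n' "$1"
    printf '  time machine = yes\n'
}

for laptop in air01 dent; do
    tm_volume "$laptop"
    echo
done
```

The output is meant to be reviewed and pasted below the `[default_for_all_vol]` section, not written over an existing afp.conf.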

/usr/local/etc/avahi/services/afp.service:

```xml
<?xml version="1.0" standalone='no'?><!--*-nxml-*-->
<!DOCTYPE service-group SYSTEM "avahi-service.dtd">
<service-group>
  <name replace-wildcards="yes">%h</name>
  <service>
    <type>_afpovertcp._tcp</type>
    <port>548</port>
  </service>
</service-group>
```

There is nothing to customize here.

/etc/rc.conf:

```sh
# time machine
dbus_enable="YES"
netatalk_enable="YES"
cnid_metad_enable="YES"
avahi_daemon_enable="YES"
```
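The same four entries can be set without opening an editor by using sysrc(8), which ships with FreeBSD. A minimal sketch; the `run` helper and `DRY_RUN` switch are my own, so by default it only prints the commands it would run.

```sh
#!/bin/sh
# Sketch: add the Time Machine knobs to /etc/rc.conf with sysrc(8).
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to apply.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

enable_time_machine_services() {
    for knob in dbus_enable netatalk_enable cnid_metad_enable avahi_daemon_enable; do
        run sysrc "${knob}=YES"
    done
}

enable_time_machine_services
```

sysrc edits `/etc/rc.conf` in place, so this needs root when `DRY_RUN=0`.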

2018-07-26 NOTE: afpd does not need to be enabled. It is started/stopped by netatalk.

Creating users

I wanted dedicated users for these backups. This is optional. I created them with adduser.

Creating directories

EDIT: 2023-03-02 Originally, I created directories, as shown below. Later, I created a separate filesystem for each user. This comes back to my mantra: when you think mkdir, think zfs create instead. Case in point, tonight I did this:

```
[knew dan ~] % sudo zfs create system/data/time_capsule/dvl-pro03
[knew dan ~] % zfs list -r system/data/time_capsule
NAME                                       USED  AVAIL  REFER  MOUNTPOINT
system/data/time_capsule                  2.96T  19.3T   219K  /data/time_capsule
system/data/time_capsule/dvl-air01        1.01T   260G   940G  /data/time_capsule/dvl-air01
system/data/time_capsule/dvl-dent          625G   575G   625G  /data/time_capsule/dvl-dent
system/data/time_capsule/dvl-dent-sparse   167G   153G   167G  /data/time_capsule/dvl-dent-sparse
system/data/time_capsule/dvl-pro02        1.17T   375G   825G  /data/time_capsule/dvl-pro02
system/data/time_capsule/dvl-pro03         219K  19.3T   219K  /data/time_capsule/dvl-pro03
```

Now back to the original notes:

Here, I create the directories to be used by these two backups:

```sh
cd /tank_data/time_capsule/
mkdir air01 dent
chown air01:air01 air01
chown dent:dent dent
```
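Following the 2023 edit above (think zfs create instead of mkdir), the same step can be sketched as one filesystem per laptop. This assumes the parent dataset is mounted at `/tank_data/time_capsule`, as the `zfs list` output at the end of this post shows; the `run` helper, `DRY_RUN` and `make_backup_fs` are my own names.

```sh
#!/bin/sh
# Sketch: one child dataset per laptop, owned by that laptop's backup user.
# Assumes PARENT is mounted at /$PARENT (the default mountpoint).
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to apply.
DRY_RUN="${DRY_RUN:-1}"
PARENT="${PARENT:-tank_data/time_capsule}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

make_backup_fs() {
    # $1 = laptop name, which is also the user name
    run zfs create "$PARENT/$1"
    run chown "$1:$1" "/$PARENT/$1"
}

for laptop in air01 dent; do
    make_backup_fs "$laptop"
done
```

The `path` lines in afp.conf stay the same, because each new filesystem mounts exactly where the directory would have been.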

Starting stuff

Here’s what you need to start:

```sh
service dbus start
service netatalk start
service avahi-daemon start
```
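To confirm everything came up, you can ask each service for its status and check that something is listening on TCP port 548, the AFP port advertised in afp.service. This is a sketch; `check_time_machine_server`, `run` and `DRY_RUN` are my own names, and by default it only prints the commands.

```sh
#!/bin/sh
# Sketch: check the daemons and the AFP listener (port 548).
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to run the checks.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

check_time_machine_server() {
    for svc in dbus netatalk avahi-daemon; do
        run service "$svc" status
    done
    # -4: IPv4 sockets, -l: listening sockets, -p: filter by port
    run sockstat -4 -l -p 548
}

check_time_machine_server
```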

2018-06-26 NOTE: This post originally included a start of the afpd service. Either I was wrong then, or things have changed. afpd is not started directly; netatalk starts and stops it.

That’s it

It should just work.

NOTE: you need to be on the same network during configuration, because your laptop discovers the server through the multicast announcements Avahi sends, and those do not cross subnets. After that, you can be on another subnet (e.g. on your VPN).

Encrypted Backups

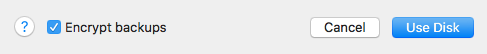

I use encrypted backups for this, because they are so easy to configure. Remember to check that box before you click Use Disk; otherwise, you have missed your opportunity.

Bonus

Mark mentioned that, from time to time, the backups may get corrupted. If that happens and you have a snapshot regime in place, you can roll back to a known good snapshot and start again.
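A minimal sketch of that snapshot-and-rollback cycle, assuming one filesystem per laptop; `tank_data/time_capsule/air01` is a hypothetical dataset name, and `take_snapshot`, `roll_back`, `run` and `DRY_RUN` are my own. With the single-dataset layout used originally, a rollback would rewind every laptop's backups at once, which is another argument for per-laptop filesystems.

```sh
#!/bin/sh
# Sketch: snapshot a laptop's backup filesystem, and roll back to a
# known good snapshot if Time Machine reports corruption.
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to apply.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

take_snapshot() {
    # $1 = dataset, $2 = snapshot name
    run zfs snapshot "$1@$2"
}

roll_back() {
    # $1 = dataset, $2 = known good snapshot name.
    # -r also destroys every snapshot newer than the target.
    run zfs rollback -r "$1@$2"
}

take_snapshot tank_data/time_capsule/air01 "$(date +%Y-%m-%d)"
```

`zfs list -t snapshot` shows which snapshots exist to choose from. It is probably wise to make sure no backup is in progress when you roll back.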

Notes

I could have created two ZFS filesystems, one for each user (i.e. each laptop), and then imposed a different quota on each.
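Per-laptop quotas can be set when each filesystem is created. In this sketch the 600G/400G split is purely hypothetical, and `create_with_quota`, `run` and `DRY_RUN` are my own names. The 1T quota on the parent still caps the combined total of both children.

```sh
#!/bin/sh
# Sketch: per-laptop filesystems with different quotas.
# The sizes below are hypothetical; choose what suits each Mac.
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to apply.
DRY_RUN="${DRY_RUN:-1}"
PARENT="${PARENT:-tank_data/time_capsule}"

run() {
    if [ "$DRY_RUN" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

create_with_quota() {
    # $1 = laptop name, $2 = quota for that laptop's filesystem
    run zfs create -o "quota=$2" "$PARENT/$1"
}

create_with_quota air01 600G
create_with_quota dent 400G
```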

After running overnight, this is what I see:

```
$ zfs list tank_data/time_capsule
NAME                     USED  AVAIL  REFER  MOUNTPOINT
tank_data/time_capsule   239G   785G   239G  /tank_data/time_capsule
```
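With a quota in place, USED plus AVAIL adds up to the quota: 239G + 785G = 1024G, i.e. the 1T set earlier. That makes "how full is it?" a simple ratio. This sketch assumes the tab-separated, exact-byte output of `zfs list -H -p -o name,used,avail`; `usage_report` is my own name.

```sh
#!/bin/sh
# Sketch: report how full each Time Machine dataset is.
# Expected input: name<TAB>used<TAB>avail, in bytes, e.g. from
#   zfs list -H -p -o name,used,avail -r tank_data/time_capsule
usage_report() {
    awk -F '\t' '{
        total = $2 + $3
        pct = (total > 0) ? 100 * $2 / total : 0
        printf "%s %.1f%%\n", $1, pct
    }'
}

# The figures above, converted to bytes (1G = 1073741824 bytes).
printf 'tank_data/time_capsule\t%s\t%s\n' \
    "$((239 * 1073741824))" "$((785 * 1073741824))" | usage_report
# prints: tank_data/time_capsule 23.3%
```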